Using a crawler to check whether number fortune tests are reliable

Python notes

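Today I stumbled on websites that offer "fortune tests" for QQ numbers and mobile phone numbers, which I found rather amusing. Judging by the comments below them, they are quite popular: a fair number of people really believe in this and will pay good money for a lucky number.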

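That was new to me. As everyone knows, fortune telling belongs to the realm of mysticism, while computing belongs to science, so seeing the two combined into a thriving business was quite a surprise.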

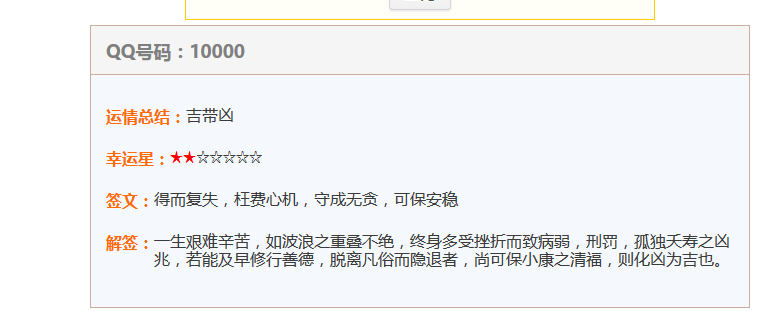

I could not resist testing my own QQ number, and the result looked pretty good.

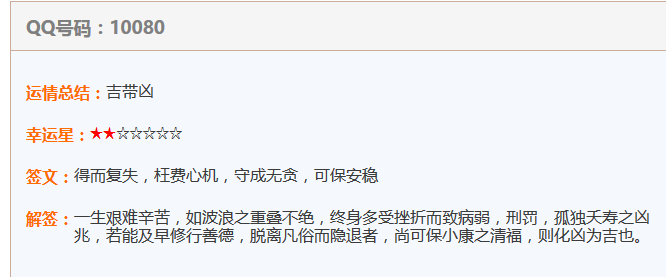

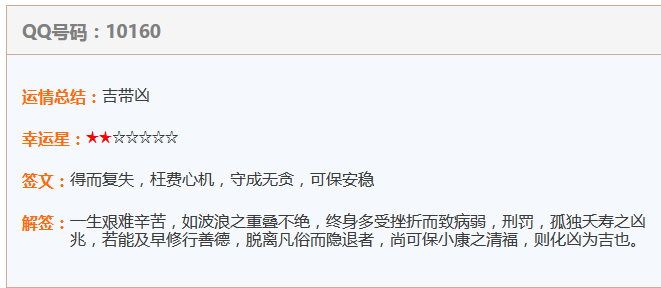

I ran the test on two different sites and got exactly the same result on both. Is there some shared convention behind this, or perhaps some obscure formula?

So I fired up Scrapy and scraped 10,000 records from one of the sites to analyze. Here is the code.

items.py

    # -*- coding: utf-8 -*-

    # Define here the models for your scraped items
    #
    # See documentation in:
    # http://doc.scrapy.org/en/latest/topics/items.html

    import scrapy


    class QqItem(scrapy.Item):
        # define the fields for your item here like:
        # name = scrapy.Field()
        result = scrapy.Field()
        star = scrapy.Field()
        qianwen = scrapy.Field()
        jieqian = scrapy.Field()
        url = scrapy.Field()

qqSpider.py

    # -*- coding: utf-8 -*-
    import scrapy
    from scrapy.http import Request
    from scrapy.selector import Selector

    from qq.items import QqItem


    class QqspiderSpider(scrapy.Spider):
        name = "qqSpider"
        allowed_domains = ["link114.cn"]
        main_url = "http://qq.link114.cn"

        def start_requests(self):
            # Request the result page for every number from 10000 to 19999
            for i in range(10000, 20000):
                url = self.main_url + "/" + str(i)
                yield Request(url, self.parse)

        def parse(self, response):
            sel = Selector(response)
            item = QqItem()
            item['result'] = sel.xpath('//*[@id="main"]/div[2]/dl[1]/dd/text()').extract()[0]
            try:
                # Store the length of the star text as the rating
                star = sel.xpath('//*[@id="main"]/div[2]/dl[2]/dd/font/text()').extract()[0]
                item['star'] = len(star)
            except IndexError:
                # No star text on the page
                item['star'] = 0
            item['qianwen'] = sel.xpath('//*[@id="main"]/div[2]/dl[3]/dd/text()').extract()[0]
            item['jieqian'] = sel.xpath('//*[@id="main"]/div[2]/dl[4]/dd/text()').extract()[0]
            item['url'] = response.url
            yield item

pipelines.py

    # -*- coding: utf-8 -*-
    from .sql import Sql
    from qq.items import QqItem


    class QqPipeline(object):

        def process_item(self, item, spider):
            if isinstance(item, QqItem):
                url = item['url']
                result = item['result']
                star = item['star']
                qianwen = item['qianwen']
                jieqian = item['jieqian']
                # Skip URLs that are already stored
                ret = Sql.select_name(url)
                if ret[0] == 1:
                    print('record already exists')
                else:
                    Sql.insert_dd_name(url, result, star, qianwen, jieqian)
                    print('record stored successfully')
            # Scrapy expects process_item to return the item
            return item

sql.py

    # -*- coding: utf-8 -*-
    import mysql.connector

    from qq import settings

    MYSQL_HOSTS = settings.MYSQL_HOSTS
    MYSQL_USER = settings.MYSQL_USER
    MYSQL_PASSWORD = settings.MYSQL_PASSWORD
    MYSQL_PORT = settings.MYSQL_PORT
    MYSQL_DB = settings.MYSQL_DB

    cnx = mysql.connector.connect(user=MYSQL_USER, password=MYSQL_PASSWORD, host=MYSQL_HOSTS, database=MYSQL_DB)
    cur = cnx.cursor(buffered=True)


    class Sql:

        @classmethod
        def insert_dd_name(cls, url, result, star, qianwen, jieqian):
            # Insert one scraped record
            sql = 'INSERT INTO lzh_qq (`url`,`result`,`star`,`qianwen`,`jieqian`) VALUES(%(url)s,%(result)s,%(star)s,%(qianwen)s,%(jieqian)s)'
            value = {
                'url': url,
                'result': result,
                'star': star,
                'qianwen': qianwen,
                'jieqian': jieqian
            }
            cur.execute(sql, value)
            cnx.commit()

        @classmethod
        def select_name(cls, url):
            # Returns a one-element row: 1 if the URL is already stored, else 0
            sql = "SELECT EXISTS(SELECT 1 FROM lzh_qq WHERE url=%(url)s)"
            value = {
                'url': url
            }
            cur.execute(sql, value)
            return cur.fetchall()[0]

settings.py

    # -*- coding: utf-8 -*-

    # Scrapy settings for qq project
    #
    # For simplicity, this file contains only settings considered important or
    # commonly used. You can find more settings consulting the documentation:
    #
    # http://doc.scrapy.org/en/latest/topics/settings.html
    # http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html
    # http://scrapy.readthedocs.org/en/latest/topics/spider-middleware.html

    BOT_NAME = 'qq'

    SPIDER_MODULES = ['qq.spiders']
    NEWSPIDER_MODULE = 'qq.spiders'

    # Crawl responsibly by identifying yourself (and your website) on the user-agent
    #USER_AGENT = 'qq (+http://www.yourdomain.com)'

    # Obey robots.txt rules
    ROBOTSTXT_OBEY = True

    # Configure maximum concurrent requests performed by Scrapy (default: 16)
    #CONCURRENT_REQUESTS = 32

    # Configure a delay for requests for the same website (default: 0)
    # See http://scrapy.readthedocs.org/en/latest/topics/settings.html#download-delay
    # See also autothrottle settings and docs
    #DOWNLOAD_DELAY = 3
    # The download delay setting will honor only one of:
    #CONCURRENT_REQUESTS_PER_DOMAIN = 16
    #CONCURRENT_REQUESTS_PER_IP = 16

    # Disable cookies (enabled by default)
    #COOKIES_ENABLED = False

    # Disable Telnet Console (enabled by default)
    #TELNETCONSOLE_ENABLED = False

    # Override the default request headers:
    #DEFAULT_REQUEST_HEADERS = {
    # 'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
    # 'Accept-Language': 'en',
    #}

    # Enable or disable spider middlewares
    # See http://scrapy.readthedocs.org/en/latest/topics/spider-middleware.html
    #SPIDER_MIDDLEWARES = {
    # 'qq.middlewares.QqSpiderMiddleware': 543,
    #}

    # Enable or disable downloader middlewares
    # See http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html
    #DOWNLOADER_MIDDLEWARES = {
    # 'qq.middlewares.MyCustomDownloaderMiddleware': 543,
    #}

    # Enable or disable extensions
    # See http://scrapy.readthedocs.org/en/latest/topics/extensions.html
    #EXTENSIONS = {
    # 'scrapy.extensions.telnet.TelnetConsole': None,
    #}

    # Configure item pipelines
    # See http://scrapy.readthedocs.org/en/latest/topics/item-pipeline.html
    ITEM_PIPELINES = {
        #'qq.pipelines.SomePipeline': 300,
        'qq.mysqlpipelines.pipelines.QqPipeline': 1,
    }

    # Enable and configure the AutoThrottle extension (disabled by default)
    # See http://doc.scrapy.org/en/latest/topics/autothrottle.html
    #AUTOTHROTTLE_ENABLED = True
    # The initial download delay
    #AUTOTHROTTLE_START_DELAY = 5
    # The maximum download delay to be set in case of high latencies
    #AUTOTHROTTLE_MAX_DELAY = 60
    # The average number of requests Scrapy should be sending in parallel to
    # each remote server
    #AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0
    # Enable showing throttling stats for every response received:
    #AUTOTHROTTLE_DEBUG = False

    # Enable and configure HTTP caching (disabled by default)
    # See http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings
    #HTTPCACHE_ENABLED = True
    #HTTPCACHE_EXPIRATION_SECS = 0
    #HTTPCACHE_DIR = 'httpcache'
    #HTTPCACHE_IGNORE_HTTP_CODES = []
    #HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'

    MYSQL_HOSTS = '127.0.0.1'
    MYSQL_USER = 'root'
    MYSQL_PASSWORD = '123456'
    MYSQL_PORT = '3306'
    MYSQL_DB = 'qq'

This post is not meant as a tutorial; it is purely a note to myself.

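Once the data was collected, the analysis suggested that the site has only 80 distinct results, repeating in a cycle of 80: for example, 10000, 10080 and 10160 get exactly the same result, as shown in the figure.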
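A cycle like this can be checked mechanically. The sketch below is not the analysis I actually ran, just one way to do it: it assumes the scraped results have been loaded into a list ordered by number with no gaps, and the helper name `smallest_period` and the synthetic `fake_results` data are made up for illustration.

```python
def smallest_period(values):
    """Return the smallest p with values[i] == values[i + p] for every valid i.

    Returns len(values) if the sequence never repeats within itself.
    """
    n = len(values)
    for period in range(1, n):
        if all(values[i] == values[i + period] for i in range(n - period)):
            return period
    return n


# Synthetic stand-in for the scraped column: 80 made-up outcomes that
# repeat every 80 numbers, mimicking the pattern described above.
fake_results = ["outcome-%d" % (n % 80) for n in range(10000, 10400)]

print(smallest_period(fake_results))  # length of the repeating cycle
print(len(set(fake_results)))         # number of distinct results
```

With real data, the list would come from the `result` column of `lzh_qq`, sorted by the number at the end of each URL. A missing page would shift the sequence and hide the cycle, so gaps need to be handled first.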

The same goes for the mobile phone number fortune test.

Conclusion: the whole thing is a joke.